Memory as a Decorator

Adding memory to agentic workflows has never been straightforward. One of the main challenges is the lack of a standardized framework for building these workflows. We started with LangChain, then watched as a wave of new frameworks emerged — each slightly different, each fragmenting the ecosystem further. At one point, it was feasible for a memory provider to tightly integrate with a single framework. That's no longer the case.

So we decided to rethink the problem entirely.

A Simpler Approach to Agentic Memory

Instead of forcing users into rigid systems or complex integrations, we focused on what people actually wanted:

Seamless memory integration into any custom agentic workflow.

The solution we landed on is intentionally simple:

That's it. One line.

A decorator that automatically captures LLM interactions and turns them into structured, reusable memory.

No complex setup. No need to rethink your architecture. No requirement to adopt a specific framework.
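To make the pattern concrete, here is a minimal sketch of how a memory-capturing decorator can work in plain Python. It is not Cognee's API: the names `remember`, `MEMORY_LOG`, and `call_llm` are hypothetical, and the "memory" is just an in-process list rather than a structured store.

```python
import functools
from typing import Callable

# Hypothetical in-memory log; a real system would persist and structure this.
MEMORY_LOG: list[dict] = []


def remember(func: Callable[..., str]) -> Callable[..., str]:
    """Record each call's inputs and output, then return the output unchanged."""

    @functools.wraps(func)
    def wrapper(*args, **kwargs) -> str:
        response = func(*args, **kwargs)
        MEMORY_LOG.append(
            {
                "function": func.__name__,
                "args": args,
                "kwargs": kwargs,
                "response": response,
            }
        )
        return response

    return wrapper


@remember  # the only line added to the existing workflow
def call_llm(prompt: str) -> str:
    # Stand-in for a real LLM call (assumption: the wrapped function returns text).
    return f"echo: {prompt}"


reply = call_llm("Which feature matters most to a startup CTO?")
```

The wrapped function keeps its name and signature, so the surrounding workflow does not need to change; the capture happens as a side effect of the call.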

Why a Decorator?

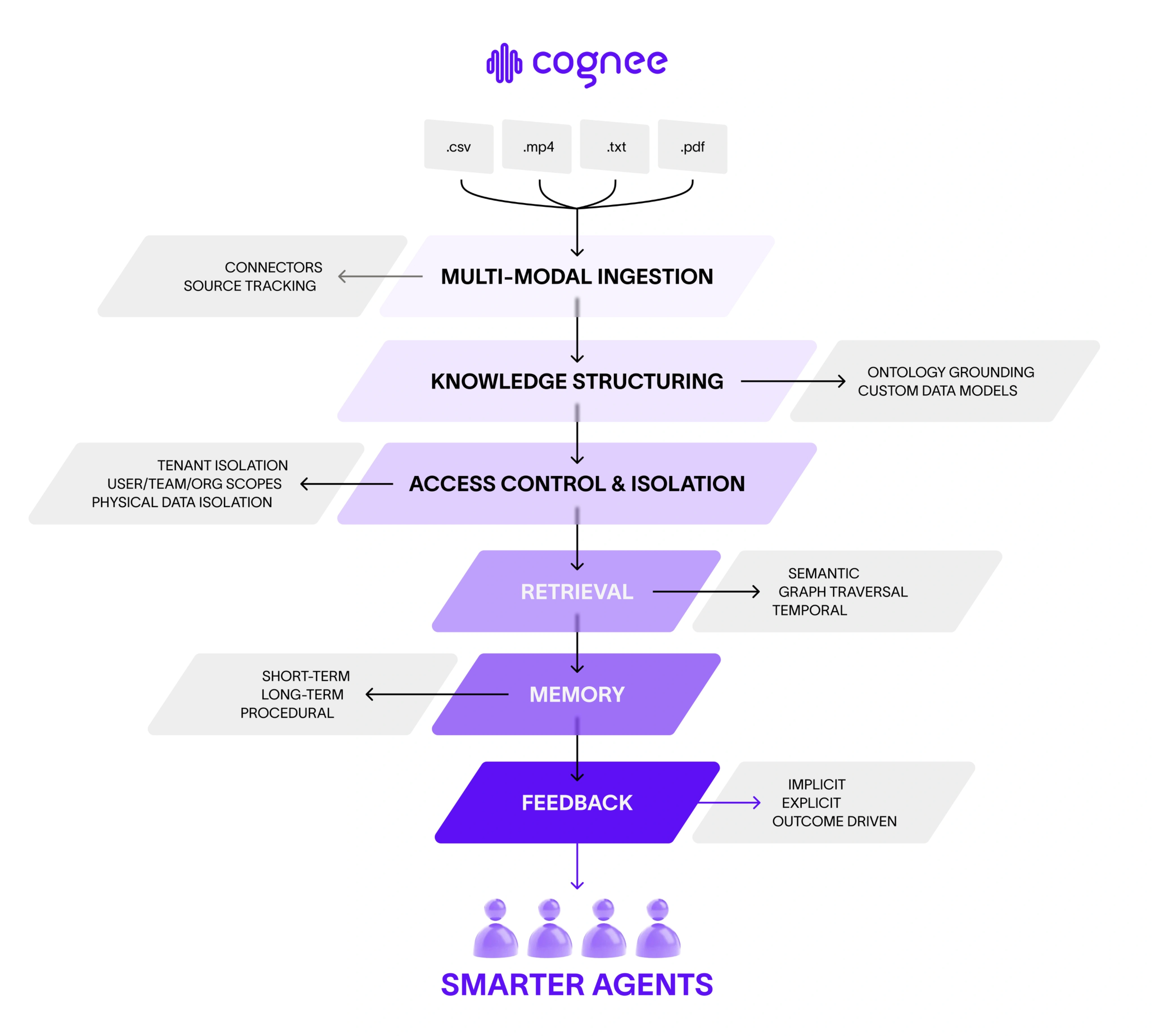

Our original vision for Cognee was to build a highly customizable, open-source system. Something modular enough to serve everyone — from solo developers experimenting with AI agents to large enterprises like pharmaceutical companies needing custom ontologies for research and discovery.

We built components like:

- Ontology mapping

- Memory systems

- Retrieval pipelines

- Feedback loops

- Data access control

But we noticed a pattern: many users didn't want to think about infrastructure. They just wanted memory to work — immediately.

So we asked ourselves:

How do we make agentic memory accessible to everyone, regardless of expertise?

The answer was clear:

Make it one line.

With a decorator, you don't need to worry about where memory belongs in your workflow. You don't need to restructure your system or rely on specific agent frameworks. You just add it — and it works.

Putting It to the Test

To validate this approach, we designed an experiment.

Setup

We built a simulated sales environment:

- A sales agent pitching six core features:

  - Multimodal ingestion

  - Knowledge structuring

  - Access control

  - Retrieval

  - Memory

  - Feedback loops

- 198 simulated customer leads

- 6 distinct buyer personas

Each lead had a hidden profile, including:

- A must-have feature

- Preferred messaging style

- Objection behavior

- A deal-breaker

The sales agent had two conversation rounds to identify the customer's needs and close the deal.
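One way to picture a hidden lead profile is as a small immutable record. The sketch below is illustrative only: the class name, field names, and the example values for messaging style, objection behavior, and deal-breaker are assumptions, not the data used in the experiment.

```python
from dataclasses import dataclass

# The six features from the sales agent's catalog.
FEATURES = (
    "multimodal ingestion",
    "knowledge structuring",
    "access control",
    "retrieval",
    "memory",
    "feedback loops",
)


@dataclass(frozen=True)
class LeadProfile:
    """Hidden profile of one simulated lead (field names are hypothetical)."""

    persona: str
    must_have: str
    messaging_style: str
    objection_behavior: str
    deal_breaker: str

    def __post_init__(self) -> None:
        # Guard against profiles that ask for a feature outside the catalog.
        if self.must_have not in FEATURES:
            raise ValueError(f"unknown feature: {self.must_have}")


# Example values are invented for illustration.
lead = LeadProfile(
    persona="startup_cto",
    must_have="feedback loops",
    messaging_style="developer experience",
    objection_behavior="asks for proof",
    deal_breaker="vendor lock-in",
)
```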

Strategies Compared

We tested three approaches:

- No Memory (Baseline) — every interaction starts from scratch.

- Context Stuffing — past conversations are appended into prompts and summarized as needed.

- Cognee Memory (Decorator-Based) — structured knowledge graph memory, automatically captured via the decorator.

How Conversations Worked

Each interaction was a back-and-forth between two agents:

Sales Agent receives:

- Current conversation

- Customer message

- Feature catalog

- Optionally, memory from past interactions

Customer Agent has a hidden profile and evaluates each pitch as interested, skeptical, or ready to buy.

Outcome rules: deal closes → Win. No close after 2 rounds → Loss.

After each interaction, the decorator automatically stores a structured memory trace, and the agent queries past insights before the next conversation.
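The loop described above can be sketched in a few lines. This is a simplified stand-in, not the experiment's code: the customer here is reduced to "ready to buy when the must-have feature is pitched", the memory is a plain list of traces, and the names `run_conversation` and `pitch_with_memory` are hypothetical.

```python
from typing import Callable

FEATURES = [
    "multimodal ingestion",
    "knowledge structuring",
    "access control",
    "retrieval",
    "memory",
    "feedback loops",
]
MAX_ROUNDS = 2  # the sales agent gets two rounds per lead

# Hypothetical memory: one trace per finished conversation.
memory: list[dict] = []


def pitch_with_memory(persona: str, tried: list[str]) -> str:
    """Prefer a feature that already won for this persona, else the next untried one."""
    for trace in reversed(memory):
        if trace["persona"] == persona and trace["outcome"] == "win":
            winner = trace["pitched"][-1]
            if winner not in tried:
                return winner
    return next(f for f in FEATURES if f not in tried)


def run_conversation(
    persona: str, must_have: str, pitch_fn: Callable[[str, list[str]], str]
) -> str:
    """Run up to MAX_ROUNDS pitches, store a trace, and return 'win' or 'loss'."""
    tried: list[str] = []
    outcome = "loss"
    for _ in range(MAX_ROUNDS):
        feature = pitch_fn(persona, tried)
        tried.append(feature)
        if feature == must_have:  # simplified "ready to buy" signal
            outcome = "win"
            break
    # Store the trace after the interaction so later conversations can query it.
    memory.append({"persona": persona, "pitched": tried, "outcome": outcome})
    return outcome


first = run_conversation("startup_cto", "knowledge structuring", pitch_with_memory)
second = run_conversation("startup_cto", "knowledge structuring", pitch_with_memory)
```

In this toy run, the first conversation needs both rounds to find the must-have feature, while the second opens with the pitch that previously won for the same persona.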

Results

| Metric | No Memory | Context Stuffing | Cognee Memory |
|---|---|---|---|
| First-pitch accuracy | 49% | 60% | 78% |
| Win rate | 90% | 91% | 97% |
| Tokens used | 353K | 928K | 597K |

Key Takeaways

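- Context stuffing improves performance, but at a steep token cost: 928K tokens versus 353K for the baseline, about 2.6× as many.

- Cognee memory reaches the highest first-pitch accuracy (78%) and win rate (97%) while using 597K tokens, roughly 36% fewer than context stuffing.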

- Structured memory outperforms raw text accumulation.

While context stuffing may be "good enough" in some cases, it becomes inefficient and costly at scale.
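The cost comparison follows directly from the results table. The short calculation below reproduces it from the reported (rounded) token counts; the variable names are ours.

```python
# Token counts as reported in the results table (rounded to the nearest thousand).
tokens = {
    "no_memory": 353_000,
    "context_stuffing": 928_000,
    "cognee_memory": 597_000,
}

baseline = tokens["no_memory"]

# Token usage relative to the no-memory baseline.
ratios = {name: round(count / baseline, 2) for name, count in tokens.items()}

# Fraction of tokens saved by Cognee memory compared with context stuffing.
savings_vs_stuffing = round(
    1 - tokens["cognee_memory"] / tokens["context_stuffing"], 2
)

# First-pitch accuracy gains over the baseline, in percentage points.
accuracy = {"no_memory": 49, "context_stuffing": 60, "cognee_memory": 78}
gains = {name: value - accuracy["no_memory"] for name, value in accuracy.items()}
```

Here `ratios` comes out at about 2.63 for context stuffing and 1.69 for Cognee memory, `savings_vs_stuffing` at 0.36, and the accuracy gains at 11 and 29 points respectively.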

What Makes Cognee Different

Traditional approaches treat past conversations as plain text. Cognee treats them as knowledge.

Instead of storing:

"Sales conversation with startup CTO. Outcome: won. Winning pitch: feedback framed as developer experience."

Cognee extracts relationships like:

startup_cto → pitched_with → feedback

feedback → framed_as → developer_experience

startup_cto → closed_with → feedback

Now, when a similar customer appears, the agent doesn't search for similar text. It asks:

"What actually worked for this type of customer?"

And gets a precise, structured answer.
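A toy version of that lookup shows the difference between matching text and following relationships. The sketch below stores the three relationships from the example as plain tuples and answers the question by traversing them; it is an illustration only, and the helper names `objects` and `what_worked` are hypothetical rather than part of Cognee.

```python
Triple = tuple[str, str, str]

# The relationships from the example above, as (subject, predicate, object).
graph: list[Triple] = [
    ("startup_cto", "pitched_with", "feedback"),
    ("feedback", "framed_as", "developer_experience"),
    ("startup_cto", "closed_with", "feedback"),
]


def objects(subject: str, predicate: str) -> list[str]:
    """Return every object linked to `subject` by `predicate`."""
    return [o for s, p, o in graph if s == subject and p == predicate]


def what_worked(persona: str) -> list[dict]:
    """For each feature that closed a deal with this persona, list how it was framed."""
    return [
        {"feature": feature, "framings": objects(feature, "framed_as")}
        for feature in objects(persona, "closed_with")
    ]


answer = what_worked("startup_cto")
```

The query returns the winning feature together with its framing, rather than a block of similar-looking conversation text.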

From Memory to Learning

This is the core shift:

- Not just storing context

- Not just retrieving text

- But learning from experience

Cognee transforms conversation logs into a queryable knowledge graph, enabling every new interaction to benefit from past ones.

The agent doesn't just remember. It learns.

Try It Yourself

Install Cognee, add the decorator, and see how it performs in your own workflows:

Have questions or ideas? Want to share what you're building? Join the community on Discord and connect with others exploring agentic memory.
