Structure Your Skills with Cognee

TL;DR: Instead of treating agent skills as loose markdown files, this experiment uses Cognee's add-cognify-search pipeline to turn them into a structured knowledge graph. Skills get enriched with task patterns, routed by semantic similarity and historical success, and improved over time through a feedback loop. The result: smarter skill routing that actually learns.

Skills are a useful abstraction for AI agents.

In most systems, a skill is just a folder with a SKILL.md file that tells the agent when to use it, what steps to follow, and what output to produce. That works well when you only have a few skills like summarization, code review, or data extraction.

The problem starts when the collection grows.

Once you have dozens of skills, agents often fall back to scanning a lot of markdown, doing rough keyword matching, or re-planning from scratch every time. Routing gets noisy. Planning gets slower. And the system does not learn from what actually worked before.

That is the idea behind this graph-skills experiment in Cognee.

Instead of treating skills as loose markdown files, we treated them as structured graph objects inside Cognee’s core flow: add, cognify, search.

1. Add: ingest the skills

The first step is to ingest a skills folder.

The parser reads each SKILL.md, pulls out frontmatter and instructions, and also scans bundled resources like references and scripts. Each skill gets a stable identity, metadata, and content hashes so the system can later detect what changed.

So the input is still the same friendly SKILL.md format. But under the hood it becomes something machine-usable, not just text sitting in folders.
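To make the parsing step concrete, here is a minimal sketch of what turning a SKILL.md into a machine-usable record could look like. It is not Cognee's parser: `ParsedSkill` and `parse_skill_md` are hypothetical names, the frontmatter is assumed to be simple `key: value` lines between two `---` markers, and SHA-256 is an assumed choice of content hash.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class ParsedSkill:
    # Hypothetical record type, for illustration only.
    skill_id: str
    metadata: dict
    instructions: str
    content_hash: str


def parse_skill_md(text: str, skill_id: str) -> ParsedSkill:
    """Split a SKILL.md string into frontmatter metadata and instruction body."""
    metadata = {}
    body = text
    if text.startswith("---\n"):
        # Assumption: frontmatter sits between the first two '---' lines.
        header, sep, rest = text[4:].partition("\n---\n")
        if sep:
            for line in header.splitlines():
                key, colon, value = line.partition(":")
                if colon:
                    metadata[key.strip()] = value.strip()
            body = rest
    # Hash the raw file so a later ingest can tell whether anything changed.
    digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
    return ParsedSkill(skill_id, metadata, body.strip(), digest)
```

Hashing the raw text rather than the parsed fields means any edit to the file, including the frontmatter, produces a new hash.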

2. Cognify: enrich and connect them

After parsing, Cognee uses structured LLM extraction to enrich each skill.

It generates:

- a cleaned-up description

- an instruction summary

- trigger phrases

- tags

- complexity level

- candidate task patterns the skill can solve (TaskPattern)

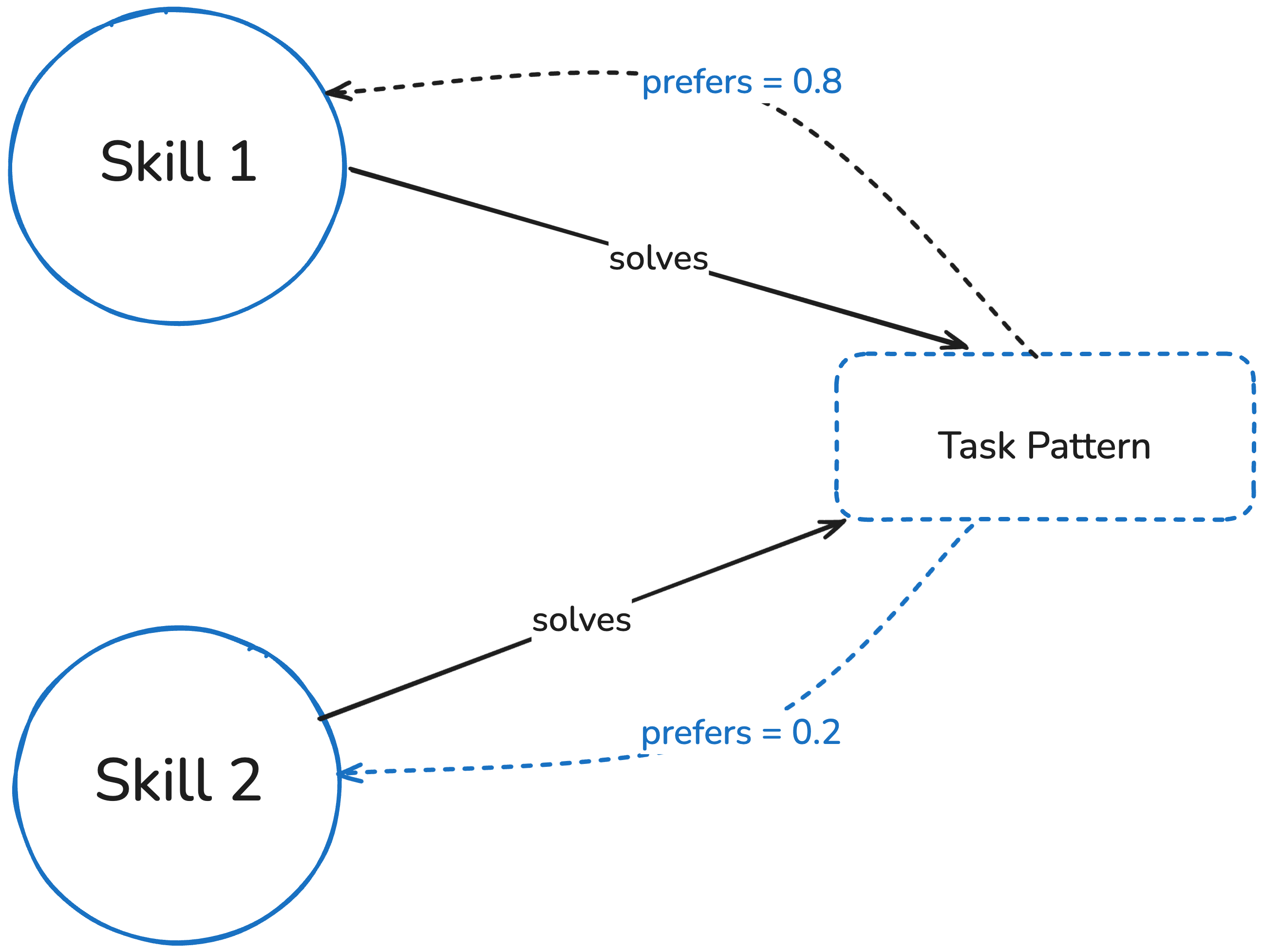

Then those task patterns are materialized as their own graph nodes, and skills are connected to them through "solves" relationships.

This is the key shift: the system is no longer storing “documents about skills.” It is building a knowledge graph of skills, intents, and relationships.
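As a rough illustration of that shape, the sketch below models skills and task patterns as nodes joined by "solves" edges. It is a toy in-memory structure, not Cognee's data model: `Node`, `SkillGraph`, and the example skill and pattern names are all made up for this example.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Node:
    node_id: str
    kind: str  # "Skill" or "TaskPattern"


@dataclass
class SkillGraph:
    # Hypothetical toy graph: nodes by id, edges as (source, relation, target).
    nodes: dict = field(default_factory=dict)
    edges: set = field(default_factory=set)

    def add_skill(self, skill_id, task_patterns):
        """Add a skill node and link it to each task pattern it can solve."""
        self.nodes[skill_id] = Node(skill_id, "Skill")
        for pattern in task_patterns:
            # Task patterns are shared nodes, created once and reused.
            self.nodes.setdefault(pattern, Node(pattern, "TaskPattern"))
            self.edges.add((skill_id, "solves", pattern))

    def skills_for(self, pattern):
        """Return the skills that have a 'solves' edge to this task pattern."""
        return sorted(s for s, rel, t in self.edges if rel == "solves" and t == pattern)


graph = SkillGraph()
graph.add_skill("code-review", ["review_pull_request", "find_bugs"])
graph.add_skill("debugger", ["find_bugs"])
```

Because task patterns are nodes of their own, two skills that solve the same kind of task end up linked through the same node, which is what later lets routing history attach to the pattern rather than to a single prompt.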

Check this example where we ran this skill repo here.

3. Search: route by meaning and by history

When a new task comes in, routing is not just “find the closest keyword match.”

The retrieval layer does three things:

- semantic search over skill summaries

- semantic search over task-pattern descriptions

- graph lookup of learned preferred edges from past runs

The final ranking blends semantic similarity with historical success for similar task patterns.

So the router is answering a better question: not “which skill looks similar to this prompt?” but “which skill tends to work for this kind of task?”

That is a much better fit for real agent systems.
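One way to picture the blended ranking is a weighted sum of semantic similarity and a smoothed success rate. The formula, the `history_weight` parameter, and the function names below are assumptions for illustration; the post does not specify how Cognee actually combines the two signals.

```python
def blended_score(semantic_similarity, successes, failures, history_weight=0.3):
    """Blend a similarity in [0, 1] with a smoothed historical success rate."""
    # Laplace smoothing: a skill with no history gets a neutral rate of 0.5.
    success_rate = (successes + 1) / (successes + failures + 2)
    return (1 - history_weight) * semantic_similarity + history_weight * success_rate


def rank_skills(candidates, history_weight=0.3):
    """Rank candidates given as (skill_id, similarity, successes, failures)."""
    scored = [
        (blended_score(sim, ok, bad, history_weight), skill_id)
        for skill_id, sim, ok, bad in candidates
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [skill_id for _, skill_id in scored]


candidates = [
    ("summarize", 0.80, 0, 8),  # closest match, but it failed on similar tasks
    ("extract", 0.75, 8, 0),    # slightly less similar, but it worked before
]
ranking = rank_skills(candidates)
```

In this example the slightly less similar skill wins because it has a track record on similar tasks; setting `history_weight` to 0 would fall back to pure similarity ranking.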

4. Learn from feedback: observe and promote

After a skill is used, the system records the outcome with observe(): the task, the selected skill, how well it worked, and optional signals like errors or tool failures.

Those runs first go into short-term memory through Redis-backed sessions. Then promote() moves the important runs into the long-term graph and updates the preference weights between task patterns and skills. In other words, short-term execution traces become long-term routing memory.

Both successes and failures matter. Success teaches the router what to trust more. Failure teaches it what to avoid next time.

That makes the whole loop:

ingest -> route -> execute -> observe -> promote -> route better next time
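The observe and promote steps can be sketched with a small in-memory stand-in. This is not Cognee's implementation: the `FeedbackLoop` class, its method signatures, the starting weight of 0.5, and the moving-average update are all assumptions, and a plain list takes the place of the Redis-backed session. For simplicity it promotes every run rather than selecting only the important ones.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Run:
    task_pattern: str
    skill_id: str
    success_score: float  # 0.0 (failed) to 1.0 (worked well)


class FeedbackLoop:
    def __init__(self, learning_rate=0.2):
        # Short-term memory: a list standing in for a Redis-backed session.
        self.session_runs = []
        # Long-term memory: (task_pattern, skill_id) -> preference weight.
        self.preference = defaultdict(lambda: 0.5)
        self.learning_rate = learning_rate

    def observe(self, task_pattern, skill_id, success_score):
        """Record the outcome of one skill execution in short-term memory."""
        self.session_runs.append(Run(task_pattern, skill_id, success_score))

    def promote(self):
        """Fold recorded runs into long-term weights and clear the session."""
        promoted = len(self.session_runs)
        for run in self.session_runs:
            key = (run.task_pattern, run.skill_id)
            # Move the weight a step toward the observed outcome.
            self.preference[key] += self.learning_rate * (
                run.success_score - self.preference[key]
            )
        self.session_runs.clear()
        return promoted


loop = FeedbackLoop()
loop.observe("find_bugs", "code-review", 1.0)  # success raises the weight
loop.observe("find_bugs", "summarize", 0.0)    # failure lowers it
promoted_count = loop.promote()
```

The same update rule handles both directions: a success pulls the weight toward 1, a failure pulls it toward 0, so the router has a signal for what to avoid as well as what to trust.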

Why Cognee is a good fit

This works because it leans into Cognee’s main value proposition:

- graph + vector together, not one or the other

- LLM enrichment to turn raw text into structured meaning

- feedback-driven routing

- custom Cognee implementations — this is not just using the default pipeline; custom models, tasks, and pipelines make it possible to treat skills, task patterns, and runs as first-class graph objects

So you keep the simple authoring experience of SKILL.md, but add retrieval, routing, and learning on top.

There are also some practical pieces that matter:

- upsert() skips unchanged skills and only reprocesses changed ones

- skills can be removed cleanly from graph and vector storage

- the graph can be visualized
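The change-detection idea behind skipping unchanged skills can be sketched with content hashes. The function below is a hypothetical stand-in that only shares its name with the real `upsert()`; its signature, the dict-based storage, and the use of SHA-256 are assumptions.

```python
import hashlib


def upsert(stored_hashes, skills):
    """Return the skill IDs that need reprocessing; update hashes in place.

    stored_hashes: dict of skill_id -> content hash from the previous ingest.
    skills: dict of skill_id -> current SKILL.md text.
    """
    changed = []
    for skill_id, text in skills.items():
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        if stored_hashes.get(skill_id) != digest:
            # New or modified skill: remember the new hash and reprocess it.
            stored_hashes[skill_id] = digest
            changed.append(skill_id)
    return changed


store = {}
first = upsert(store, {"a": "one", "b": "two"})    # everything is new
second = upsert(store, {"a": "one", "b": "two"})   # nothing changed
third = upsert(store, {"a": "one", "b": "two!"})   # only "b" was edited
```

Since enrichment involves LLM calls, skipping unchanged skills this way avoids paying for the same extraction twice.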

There is even a meta-skill for skill routing itself, so the system can teach an agent how to use the system: ingest, route, load, execute, observe, promote.

How to try it

If you want to test it yourself:

- install cognee

- set LLM_API_KEY

- point it at a folder of SKILL.md files

- ingest the skills

- query for the best skill for a task

- record the result

- promote the run so routing improves

You can use the system through Python, the CLI, or MCP in tools like Cursor and Claude Code, either as a skill router that picks the best SKILL.md workflow for a task or as a memory layer that makes those skills searchable, reusable, and learnable over time.

The full source code is available on GitHub: cognee/skills. The fastest way to try it is to run python -m cognee.skills.example, which executes the entire ingest-route-execute-observe-promote loop and generates a graph visualization.

The bundled example skills are adapted from Agent-Skills-for-Context-Engineering by @koylanai.

Final thought

Skills are useful. A pile of skills is not.

Stay tuned… More coming soon!!
